What Percentage Of American Women Hold Jobs?

- From 1990 to 2019, between 53% and 58% of women aged 16 and older in the US held paid jobs.

- More women than men have earned higher education degrees in recent years, a sharp contrast to the days when women were not admitted to many US universities, such as Yale and Harvard.

- In 1890 just 5% of doctors in the US were women, a percentage that rose to 17% by the 1980s.

At one point in America’s history, the vast majority of women were homemakers who spent their days caring for children at home and tending to the needs of the “homestead”. They sewed clothes for the family, cleaned the home, washed clothing and bedding, cooked, made foods like butter, taught children to read, and tended the family’s gardens. These women were certainly working hard, but they were not paid for their efforts.

Today, times have changed, at least in the US. Many women in the country, and elsewhere around the world, now hold paid positions in various fields outside the home. A survey published by Statista.com showed that from 1990 to 2019, between 53% and 58% of women aged 16 and older in the US held paid jobs. By one measure, women have even edged ahead of men in the workforce: according to the US government’s December 2019 jobs report, women held just over half, 50.04%, of all jobs in the country. Women are still fighting for wage equality in the Western world, but the advances that have been made, such as equal access to a university education, are something to be celebrated.

How did we get to where we are now, and what are women being paid to do? Here is a quick look.

A Brief History Of Working Women In The US

The US is known for its freedoms and equality, but for women in the workplace, progress has been a struggle. According to the Women’s International Center website, some women in colonial America held jobs, but most did not. Most working women at that time were seamstresses or kept boardinghouses, and a select few were lawyers, preachers, teachers, writers, doctors, and singers.

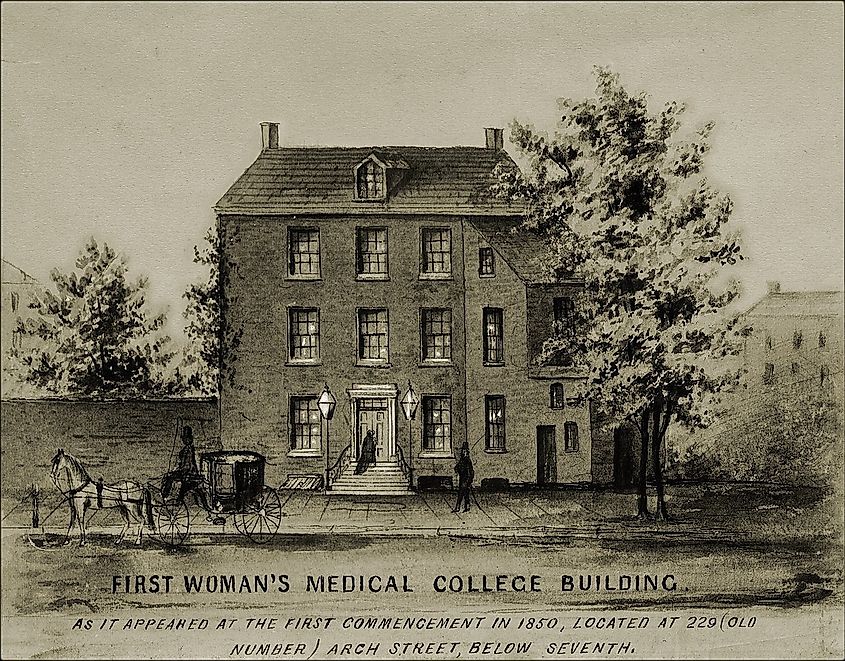

As time went on, women began to show up in factories and as domestic workers. By and large, however, women were excluded from professional positions. When medical schools began to appear at the beginning of the 19th century, for example, women were banned from attending them. This spurred women to open their own medical schools, such as the Women's Medical College of Pennsylvania, and slowly the number of female doctors began to rise again. By 1890, however, just 5% of doctors in the US were women. As of 1930, just 2% of all American lawyers and judges were women, and the country had no formally recognized female engineers.

Much has changed for women in the US, but it has taken time. Higher education has not always been accessible to women. Yale University, for example, did not start accepting female students until 1969. (Harvard did so in 1946.) Today, women still face the hurdle of having a baby without being guaranteed paid maternity leave. Federal law states that a full-time worker is entitled to time off when she has a child, and that she has the right to return to the same job once her leave is done, but there is still no federal law that requires that leave to be paid, and some employers choose not to pay it. In contrast, other countries, including Mexico, India, Germany, Australia, and Brazil, require companies by law to grant 12 weeks of maternity leave with full pay.

Top Fields That Employ Women

Certain fields of work are still dominated by men in the US. Men are overrepresented in auto mechanics and in fields like construction. Women, on the other hand, now dominate certain occupations, according to US Census data from 2000 and 2016. These include public relations specialist, veterinarian, social and community service manager, production clerk, parking enforcement worker, technical writer, hotel manager, compliance officer, fabric and apparel patternmaker, and optician.

Women have also moved forward on the education front. In 2016, more women than men earned bachelor’s, master’s, and doctoral degrees. Catalyst.org states that women earned 57.3% of bachelor’s degrees, 59.4% of master’s degrees, and 53.3% of all doctoral degrees in the country. This continued growth in education should correlate with a continued increase of women in the workplace as time goes on.

Future Work

Wage inequality is still an issue in the US. In 2018, women in the country earned a median salary of $45,097, while men earned a median of $55,291. Women still earn about $0.81 for every dollar men earn in the country. This inequality is, in fact, a global trend. According to an index released by the World Bank in 2019, just six countries in the world grant women the same work rights as men, covering everything from applying for a job to having a baby and receiving a pension. Only Belgium, Latvia, Sweden, Luxembourg, France, and Denmark currently have laws in place that ensure women’s work rights are the same as men’s.

Women in the US will need to keep fighting for these rights in order to achieve true equality in the workplace. Many advances have been made over the years, so there is reason to believe this one is possible too, with time. For the moment, women can celebrate their victories and continue to look towards the future.